The AI Paradigm

I've seen a lot of bad takes and vitriol over AI so here is mine:

As usual, there is nuance that the masses are too dense to see.

I didn’t watch the Super Bowl but I heard from people on social media that most of the ads were for gambling and AI. A lot of people seem upset at that, and I’ll speak to the latter. One creator I really like made a short video highlighting the artistry of Bad Bunny’s halftime show to preface his disparaging of AI. “If AI was inevitable, they wouldn’t be forcing us to like it.” Other creators who make content primarily for a gaming audience highlight how datacenter buildouts have led to a shortage in RAM and GPUs that makes PC gaming increasingly expensive and prohibitive. Residents of communities where datacenters are constructed are also noticing significant increases to their electricity bills. All the while, OpenClaw adopters are giving agents free rein over their data and systems. What a zeitgeist!

If you read the viral essay "Something Big is Happening," you saw an AI startup CEO write that he is "no longer needed for the actual technical work," sounding the alarm that, now that the latest models are the greatest at coding, they'll soon be coming for jobs in every sector imaginable. Look past his hyped-up BS as a professional VC grifter and he has a point.

I see a ton of people online, from randos to celebs, bitching about AI: that it's unethical if not evil, that it just sucks overall so people aren't using it. They're misled and misguided. The Rock Rebellion is happening and these people are deniers.

For those who are not aware, the Rock Rebellion is what I call the paradigm shift that is happening. The name comes from the yogapunk epic I am writing, wherein an AI-driven apocalypse creates a fractured world that can only be healed by uniting technology and nature, order and creativity. Frankly, it’s difficult to write because the world seems to change faster than my writing can keep up. For context, I started this project in late 2019.

Anyways, I think there are 2 main factors at play in Rock Rebellion denial.

1. Every tech goes through a hype cycle. We remember when mobile phones were hyped up, when the internet was hyped up. Gen Z has not really experienced a paradigm shift. They always had the internet and mobile phones. I don't think social media is itself a paradigm shift apart from the internet. In fact, I would argue that social media is just an application on top of the internet paradigm. Crypto and NFTs are a scam, not a breakthrough. AI is Gen Z’s first real gamechanger.

2. Mass consumers incorrectly assume that AI is consumer-grade chatbots. The real magic is happening behind the scenes in collabs between AI and other fields. The chatbots are loss leaders to attract investments which go towards infrastructure, and that will pay dividends for a long time. It's like how Amazon started as an online bookstore but today most of their profit comes from renting out cloud infrastructure to millions of businesses.

So while the AI CEO has an agenda of promotion, he's not wrong that this is going to drastically affect how we work. By contrast, the most irksome takes come from gamers who are frustrated that the hardware supply chain that once catered to their premium consumption is now prioritizing compute infrastructure. As if the bubble will pop and suddenly they'll be able to buy RAM and GPUs again. I feel like someone who can afford to throw g-notes at a pcmasterrace build should be able to identify a bottleneck.

The legal and ethical concerns are the most legitimate, IMO. No way around it. Courts said it’s fair use to train AI on copyrighted material, but the AI companies pirate said material. There are privacy and security risks associated with AI applications and agents, respectively. In typical tech company fashion, the frontier model providers try to save face by publicly complaining that they want regulation but they don’t really want regulation and lobby extensively to block regulations.

Environmental externalities are important, and we often see discussions of datacenters’ carbon emissions and water consumption. At the same time, I think it’s necessary to put this into perspective: AI infrastructure falls several orders of magnitude behind beef production. Why is nobody talking about this?! Oh yeah, the lobbyists… Here is a link to an AI-generated paper on the topic, synthesizing multiple research articles to comparatively analyze the digital and biological “metabolisms.” In short, a single serving of steak is equivalent to an entire year of AI use by an individual. Until the critics of AI commit to reevaluating their protein choices, theirs is selective outrage.

The energy externalities might be workable. I'm optimistic that AI can optimize power consumption via unutilized off-peak capacity. I'm pessimistic that lobbyists won't allow tech companies to cover any increase in residents' utility bills, which I would consider the just outcome. They will argue that datacenters create jobs, but that is almost a lie. While they do create jobs in construction, the operation of datacenters requires relatively small staffing. Given the decentralized nature of cloud computing, having a datacenter in your backyard does not create tech access. The benefits to the local community are almost nil. In the LA area, Monterey Park is one city that has discussed the possibility of building a datacenter, currently under a moratorium as they face resistance. I read that the city would get $5M in taxes plus some other incentives from the developer like a public park, but honestly that’s not worth it. I can’t think of a good reason to build these anywhere near urban or suburban areas.

Let’s talk about the AI bubble. By now we’ve all seen the circular funding chart that shows how Nvidia, Oracle, OpenAI, and others are throwing money around. Critics argue that they’re inflating their valuations without creating value. A crazy amount of investment is going into the AI companies with no clear path to ROI. Subscription models and advertisements give the appearance of an intention to profit, but as I said earlier, the products are loss leaders. It costs on the order of $1B to develop a new frontier AI model, including the various training runs and overhead. I’m skeptical that a subscription model will provide the necessary profit for OpenAI and Anthropic to see a return. Instead, I suspect key partnerships and collaborations will bring in the big bucks.

Consider that the three big cloud providers already back a horse in the AI race. Alphabet obviously backs itself (DeepMind). Amazon backs Anthropic. Microsoft backs OpenAI. It’s been like that for the last few years. Just as GCP, AWS, and Azure dominate cloud computing, so DeepMind, Anthropic, and OpenAI dominate frontier models. Sorry, Elon and Zuck, you’re too far behind. What this means is the three AI providers have a direct pipeline to the datacenter infrastructure needed to power their frontier model development and deployment. When the AI bubble pops, they’ll take a hit but will survive. It’s hard to say the same for Meta AI and xAI. Certainly not the case for the myriad AI startups popping up that offer little more than a wrapper on top of an established model by the aforementioned providers; the CEO I mentioned at the top is no exception. In any case, the hardware infrastructure that the behemoths require will continue to be built out, albeit to a potentially lesser extent after the bubble pops. But just as network infrastructure continued to grow after the Dot Com Bubble, so too will cloud computing infrastructure after the AI Bubble. That means that hardware providers like Nvidia and Broadcom, plus their supply chain including Micron, ASML, and Lam Research, will do alright.

From where come the dividends? As an example, my fellow Caltech alum Connor Coley at MIT published a paper last year presenting “PrexSyn, an efficient and programmable model for molecular discovery… setting a new state of the art in synthesizable chemical space coverage, molecular sampling efficiency, and inference speed.” While their model requires a vast amount of training data, it moves AI-driven chemistry one step forward towards feasible synthesis. This is promising because, so far, AI discovery has not yielded very many material results despite screening millions of structures in biology and materials science.

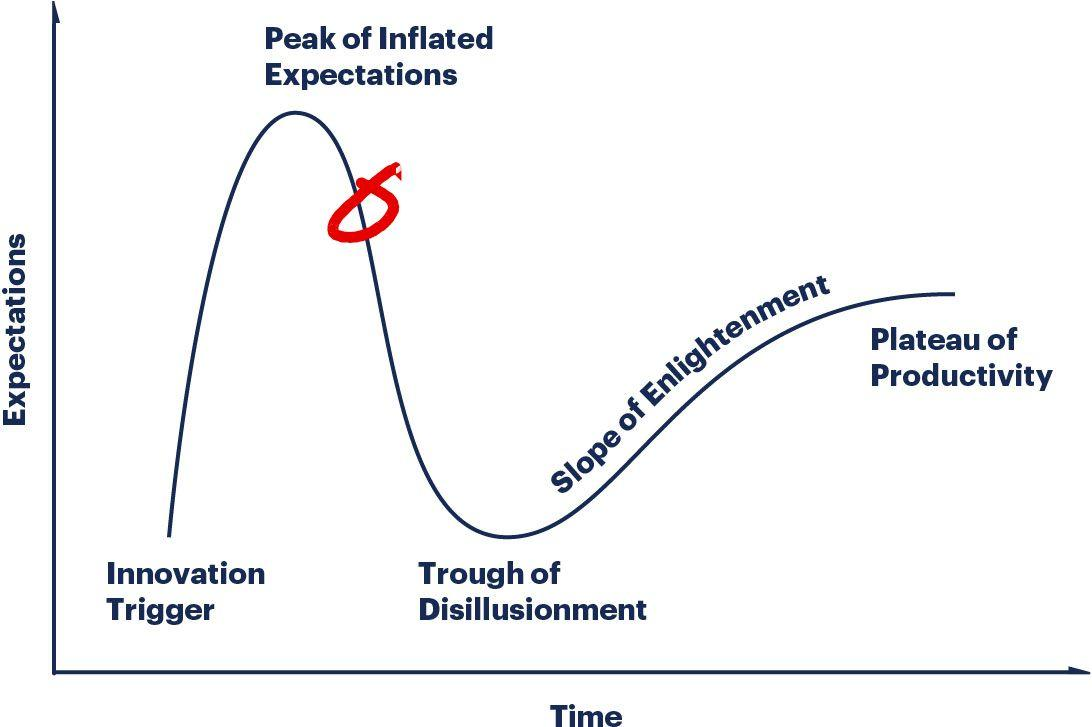

My mind floats to two concepts that describe technology trends and paradigms: the Gartner Hype Cycle and Thomas Kuhn’s The Structure of Scientific Revolutions, respectively. Each describes how perception changes over time. I think the reason I revisit these models is that current AI is both a technology following the hype cycle and a paradigm.

Gartner’s picture plots expectations over time with a sharp rise to a similarly sharp drop followed by a much more shallow rise and plateau. When the technology is entering the market, funding is easy because investors are hyped, until reality sinks in. If it survives the “trough of disillusionment” and climbs the “slope of enlightenment,” it becomes commercially viable and produces value under tempered expectations. The Gartner model is straightforward: clearly, today’s AI is early in the trough of disillusionment. Hype is still high but waning as early adopters’ expectations are not met and externalities are revealed. Assuming infrastructure buildout continues, surely we will climb the slope of enlightenment.

Kuhn’s model starts with “normal science,” when progress is made by scientists solving different puzzles within an established paradigm, a universally accepted reality consisting of accepted theories (example: medieval geocentrism). Normal science accumulates anomalies which the paradigm cannot explain; as these accumulate, there is a crisis that can only be resolved with extraordinary research by radical thinkers willing to discard the legacy institution. This is the revolution (example: heliocentrism). A new paradigm is proposed and debated and eventually adopted with intense yet decaying resistance. As the new paradigm becomes the institution, the next generation learns it and practices normal science within it.

Kuhn’s model applies to big paradigm shifts and small paradigm shifts, and the two are related. Consider how the discovery of X-rays, which was only a big deal to optical researchers, bridged the truly revolutionary paradigms of Maxwell and Einstein. In the big picture, small shifts that only affect how science is done eventually add up to the crisis that brings about the revolution in how we perceive reality.

The last big paradigm shift in artificial intelligence was AlexNet in 2012 when Hinton’s team used GPUs and kicked off the deep learning revolution. Smaller shifts including the transformer architecture, diffusion models, and massive scaling, while remarkable engineering, are adding up to a crisis. Improved performance, memory, and reasoning somewhat resemble the epicycles added to solve the retrograde anomaly in the geocentric model. Meanwhile normal science is happening in biology, physics, and other fields, assisted with deep learning in many cases. Current AI is great at exploring a parameter space and selecting candidates that might work, but the physical world is the ultimate judge. The anomalies are piling up.

Fundamentally, normal science within this paradigm is based on brute force. Deep learning is probabilistic, enabled by massive matrix calculations run in parallel. This is why throwing more data at it yields better results. But we are running out of data to continue scaling, even with infrastructure building out. Nevertheless, I am optimistic that, even when we reach a new paradigm, the compute infrastructure will deliver exceptional long-term value.

At the risk of concluding with a cliche, these are truly interesting times. AI is driving an unprecedented buildout of computing infrastructure that is sure to pay dividends for years to come across the sciences. The negative externalities are significant on their own yet small compared to those of the beef that consumers happily scarf down. And consumer-grade AI is marketing; the sciences are where the money is, but over a long horizon. Investors who bought into the BS of B2B SaaS in the 2010s and now expect a short-term ROI on AI are losers. Deep learning is a new institution that has disrupted the legacy SaaS institution, yet this is far from over. The next paradigm is coming, and may even already be here.